About CSC411/2515

This course serves as a broad introduction to machine learning and data mining. We will cover the fundamentals of supervised and unsupervised learning, with a focus on neural networks and on policy gradient methods in reinforcement learning. We will use the Python NumPy/SciPy stack. Students should be comfortable with calculus, probability, and linear algebra.

Teaching Team

| Instructor | Section | Office Hours | Email |
|---|---|---|---|
| Michael Guerzhoy | Th6-9 (SF1101) | M6-7, W6-7 (BA3219) | guerzhoy [at] cs.toronto.edu |
| Lisa Zhang | T1-3, Th2-3 (RW117) | Th11-12, 3:30-4:30 (BA3219) | lczhang [at] cs.toronto.edu |

When emailing instructors, please include "CSC411" in the subject.

Please ask questions on Piazza if they are relevant to everyone.

TA office hours are listed here.

Study Guide

Tentative Schedule

| Week | Day | Night | Content | Notes/Reading | Deadlines |
|---|---|---|---|---|---|
| | | | Review of Probability | Deep Learning 3.1-3.9.3 | Math Background Problem Set: complete ASAP |
| | | | Review of Linear Algebra | Deep Learning 2.1-2.6 | |
| Week 1 | Jan 4 | Jan 4 | Welcome; K-Nearest Neighbours | Reading: CIML 3.1-3.3. Video: A No Free Lunch theorem | |
| | Jan 9 | Jan 4 | Linear Regression | Reading: Roger Grosse's Notes on Linear Regression. Reading: Alpaydin Ch. 4.6. Just for fun: why don't we try to look for all the minima, or the global minimum, of the cost function? Because it's an NP-hard task: if we could find global minima of arbitrary functions, we could also solve any combinatorial optimization problem. The objective functions that correspond to combinatorial optimization problems will often look "peaky": exactly the kind of functions that are intuitively difficult to optimize. | |
| | Jan 9 | Jan 4 | | | |
| Week 2 | Jan 11 | Jan 11 | NumPy Demo: html, ipynb. NumPy Images: html, ipynb. 3D Plots (contour plots, etc.): html, ipynb. Gradient Descent (1D): html, ipynb. Gradient Descent (2D): html, ipynb. Linear Regression: html, ipynb | What is the direction of steepest ascent at the point (x, y, z) on a surface plot? Solution (Video: Part 1, Part 2). Video: 3Blue1Brown, Gradient Descent. | |
| | Jan 16 | Jan 11 | Multiple Linear Regression; Linear Classification (Logistic Regression); Maximum Likelihood; Bayesian Inference | Reading: Andrew Ng's Notes on Logistic Regression. Reading: Andrew Ng, CS229 notes. Reading: Alpaydin, Ch. 4.1-4.6 | |
| | Jan 16 | Jan 11 | | | |
| Week 3 | Jan 18 | Jan 18 | Bayesian inference and regularization (updated). Unicorns Bayesian inference: html, ipynb. Overfitting in linear regression: html, ipynb | Videos: Unicorns and Bayesian Inference, Why I don't believe in large coefficients, Why L1 regularization drives some coefficients to 0. Reading: Alpaydin, Ch. 14.1-14.3.1 | |
| | Jan 22 | Jan 18 | Neural Networks | Reading: Deep Learning, Chapter 6. CS231n notes, Neural Networks 1. Start reading CS231n notes, Backpropagation. Videos on computation for neural networks: Forward propagation setup, Forward Propagation Vectorization, Backprop specific weight, Backprop specific weight pt 2, Backprop generic weight, Backprop generic weight: vectorization. Optional video: 3Blue1Brown, But what *is* a Neural Network?, Gradient Descent, What is Backpropagation really doing? Just for fun: Formant Frequencies. Just for fun: Andrej Karpathy, Yes you should understand Backprop | |
| | Jan 22 | Jan 18 | | | |
| Week 4 | Jan 25 | Jan 25 | PyTorch Basics (ipynb, html); Maximum Likelihood with PyTorch (ipynb, html); Neural Networks in PyTorch, low-level programming (ipynb, html) and high-level programming (ipynb, html); if there is time, Justin Johnson's Dynamic Net (ipynb, html) | Reading: PyTorch with Examples. Just for fun: Who invented reverse-mode differentiation? | Project #1 due Jan 29th |
| | Jan 30 | Jan 25 | Neural Networks, continued. Neural Networks Optimization, Activation functions, multiclass classification with maximum likelihood | Reading: Deep Learning, Ch. 7-8. Just for fun: Brains, Sex and Machine Learning, Prof. Geoffrey Hinton's talk on Dropout (also see the paper) | |
| | Jan 30 | Jan 25 | | | |
| Week 5 | Feb 1 | Feb 1 | | Reading: Deep Learning, Ch. 9; CS231n notes on ConvNets. Video: Guided Backprop: idea (without the computational part). Just for fun: the Hubel and Wiesel experiment. Just for fun: Andrej Karpathy, What I learned from competing against a ConvNet on ImageNet. | Project #1 bonus due Feb 5th |
| | Feb 6 | Feb 1 | | | |
| | Feb 6 | Feb 1 | | Reading: CIML Ch. 8, Bishop 4.2.1-4.2.3, Andrew Ng, Generative Learning Algorithms | |
| Week 6 | Feb 8 | Feb 8 | Gaussian classifiers, Multivariate Gaussians (ipynb), Mixtures of Gaussians and k-Means | Reading: Andrew Ng, Generative Learning Algorithms; Andrew Ng, Mixtures of Gaussians and the EM Algorithm. A fairly comprehensive introduction to multivariate Gaussians (more than you need for the class): Chuong B. Do, The Multivariate Gaussian Distribution. Reading: Alpaydin, 7.1-7.6. Videos: NB model setup, P(x), EM for the NB model. Just for fun: Radford Neal and Geoffrey Hinton, A View of the EM Algorithm that Justifies Incremental, Sparse, and other Variants -- more on maximizing the likelihood of the data using the EM algorithm (optional and advanced material). | |
| | Feb 13 | Feb 8 | | | |
| | Feb 13 | Feb 8 | | | |
| Week 7 | Feb 15 | Feb 15 | EM Tutorial: Gaussians, Binomial. | Reading: CIML Ch. 15, Bishop Ch. 12.1. Reading: Alpaydin, Ch. 6.1-6.3. Just for fun: average faces are more attractive, but not the most attractive. Francis Galton computed average faces pixelwise (by using projectors) over 100 years ago, just like we are doing for centering data before performing PCA. | Project #2 due Feb 23rd. Grad Project Proposal due Feb 28th |
| Reading Week | Feb 27 | Feb 15 | | | |
| | Feb 27 | Feb 15 | | | |
| Week 8 | Mar 1 | Mar 1 | Review Tutorial (8pm-9pm for evening section) | Reading: Alpaydin Ch. 9, CIML Ch. 1. Just for fun: For more on information theory, see David MacKay, Information Theory, Inference, and Learning Algorithms or Richard Feynman, Feynman Lectures on Computation, Ch. 4. | Midterm Mar 2nd, 6pm-8pm (EX320: A-Lin; EX300: Liu-Z). Midterm / Solution |
| | Mar 6 | Mar 1 | | | |
| | Mar 6 | Mar 1 | | | |

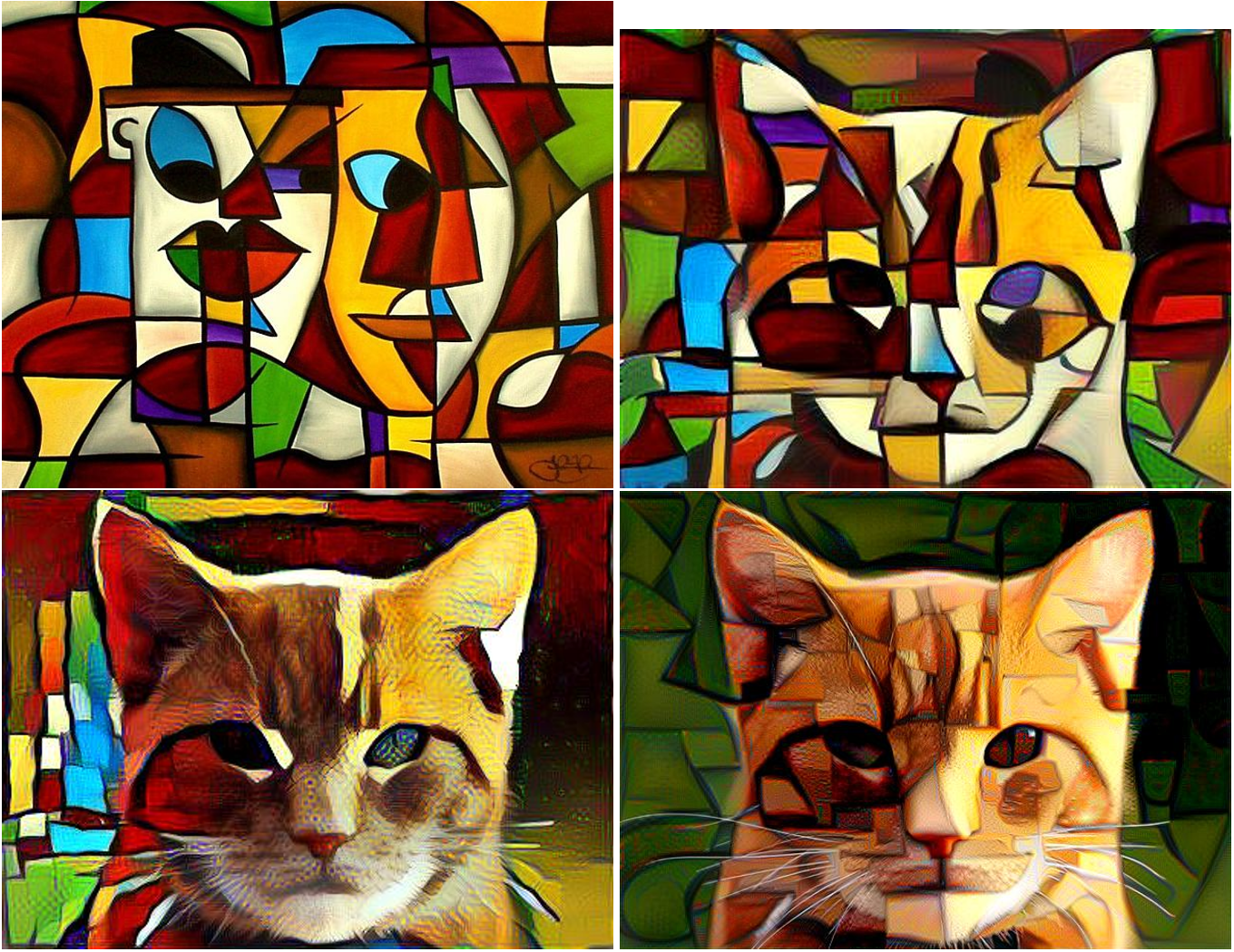

| Week | Day | Night | Content | Notes/Reading | Deadlines |
|---|---|---|---|---|---|
| Week 9 | Mar 8 | Mar 8 | Neural Style Transfer, Deep Dream (evening section only); Evolution Strategies for RL (evening section only) | Midterm solutions. Video solutions for Q6: data likelihood, the E-step, the M-step. Extra optional material: Preview: MLE for Poisson, deriving the E-step and the M-step by maximizing the expected log-likelihood. Reading: Ch. 1 and Ch. 13.1-13.3 of Sutton and Barto, Reinforcement Learning: An Introduction (2nd ed.). Reading: Alpaydin, 18.1-18.4 (for general background). Just for fun: Deep Dream images, Deep Dream grocery trip. Just for fun: Reinforcement Learning for Atari. Just for fun: A very fun paper on learning to play Super Mario from the SIGBOVIK conference (link to pdf). Just for fun: the OpenAI blog post on Evolution Strategies in RL. Video of the Bipedal Walker learning to walk | |
| | Mar 13 | Mar 8 | | | |
| | Mar 13 | Mar 8 | | | |
| Week 10 | Mar 15 | Mar 15 | Reinforcement Learning tutorial | Reading: Alpaydin, Ch. 13.1-13.5 | Project #3 due Mar 19th |
| | Mar 20 | Mar 15 | SVMs and Kernels | | |
| | Mar 20 | Mar 15 | | | |
| Week 11 | Mar 22 | Mar 22 | Review of the Policy Gradients starter code for P4 | Reading: Alpaydin, Ch. 17.1-17.2, 17.6, 17.7 (?) | |
| | Mar 27 | Mar 22 | | | |
| | Mar 27 | Mar 22 | | | |
| Week 12 | Mar 29 | Mar 29 | Review Tutorial | | Project #4 due Apr 2 |
| | Apr 3 | Mar 29 | Overview: successes in supervised learning. Overview: unsupervised learning | Suggested reading: Ian Goodfellow, Generative Adversarial Networks tutorial at NIPS 2016 (skim for applications and the description of GANs). | |
| | Apr 3 | Mar 29 | | | |
| Final Examination (CSC411) | April 2018 exam timetable | | | | |
| CSC2515 Project | due Apr. 26 | | | | |